Int is a standard library value type (implemented as a struct) in Swift that represents a signed, two’s complement integer. Int is platform-dependent: it is guaranteed to be the same size as the native word size of the target architecture. On 32-bit platforms, Int has the same size as Int32; on 64-bit platforms, it has the same size as Int64. In both cases Int remains a distinct type from the explicitly sized variants.

Bounds and Memory

Because Int is platform-dependent, its minimum and maximum representable values are accessed via the static properties Int.min and Int.max rather than assumed constants.
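As a minimal sketch of querying these bounds, the snippet below reads the limits and the storage size at runtime instead of hard-coding them. The variable names are illustrative only.

```swift
// Bounds adapt to the platform's word size, so read them from the type.
let lowest = Int.min
let highest = Int.max

// bitWidth is 64 on 64-bit platforms and 32 on 32-bit platforms.
let bitWidth = Int.bitWidth

// MemoryLayout reports the size in bytes (bitWidth / 8).
let byteSize = MemoryLayout<Int>.size

print("Int range: \(lowest)...\(highest)")
print("Int uses \(bitWidth) bits (\(byteSize) bytes)")
```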

Literal Representation

The Swift compiler infers Int as the default type for integer literals. Literals can be written in decimal (no prefix), binary (0b), octal (0o), or hexadecimal (0x) form. Underscores can be inserted between digits for readability without affecting the compiled value.
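The literal forms can be illustrated with a short example. The constant names here are hypothetical and chosen only for this sketch.

```swift
// With no type annotation, each integer literal is inferred as Int.
let decimal = 1_000_000      // underscores are ignored by the compiler
let binary = 0b1111_0000     // 240
let octal = 0o17             // 15
let hexadecimal = 0xFF       // 255

print(type(of: decimal))     // Int
```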

Explicitly Sized Variants

While Int is the recommended default, Swift provides explicitly sized integer structs for strict memory layouts, network protocols, or C interoperability. Swift is strongly typed and does not support implicit type coercion; explicitly sized integers do not implicitly convert to Int or to each other, so every conversion must be written out with an initializer.

- Signed: Int8, Int16, Int32, Int64
- Unsigned: UInt, UInt8, UInt16, UInt32, UInt64
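Because no implicit conversion exists between these types, mixing them requires an explicit initializer. The following is a rough sketch of the common conversion initializers from the standard library; the values and names are made up for illustration.

```swift
let small: Int8 = 100
let count: Int = 1_000

// let sum = small + count   // would not compile: Int8 and Int do not mix
let sum = Int(small) + count // widening an Int8 to Int always succeeds

// Narrowing may not fit, so the standard library offers several strategies.
let narrowed = Int8(exactly: count)               // nil: 1000 does not fit in Int8
let clamped = Int8(clamping: count)               // 127: pinned to Int8.max
let truncated = UInt8(truncatingIfNeeded: count)  // 232: keeps only the low 8 bits
```

Note that the plain initializer, such as Int8(count), traps at runtime when the value does not fit, which is why the failable and clamping forms are often preferable for untrusted input.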

Overflow and Safety Mechanics

Swift prioritizes safety by trapping (triggering a runtime crash) when an arithmetic operation produces a result outside the representable range of the Int type. To perform two’s complement wrap-around arithmetic without trapping, Swift provides explicit overflow operators: &+ (addition), &- (subtraction), and &* (multiplication).
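A brief sketch of the wrapping behavior follows. It also shows addingReportingOverflow, a standard library method not mentioned above, as one way to detect overflow without trapping; the constant names are illustrative.

```swift
// Overflow operators wrap around instead of trapping.
let wrappedSum = Int.max &+ 1        // wraps to Int.min
let wrappedDiff = Int.min &- 1       // wraps to Int.max
let wrappedProduct = Int8.max &* 2   // 254 does not fit in Int8, wraps to -2

// Reporting variant: returns the wrapped value together with an overflow flag.
let (partial, overflowed) = Int.max.addingReportingOverflow(1)

print(wrappedSum == Int.min, wrappedDiff == Int.max, wrappedProduct)
print(partial, overflowed)
```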

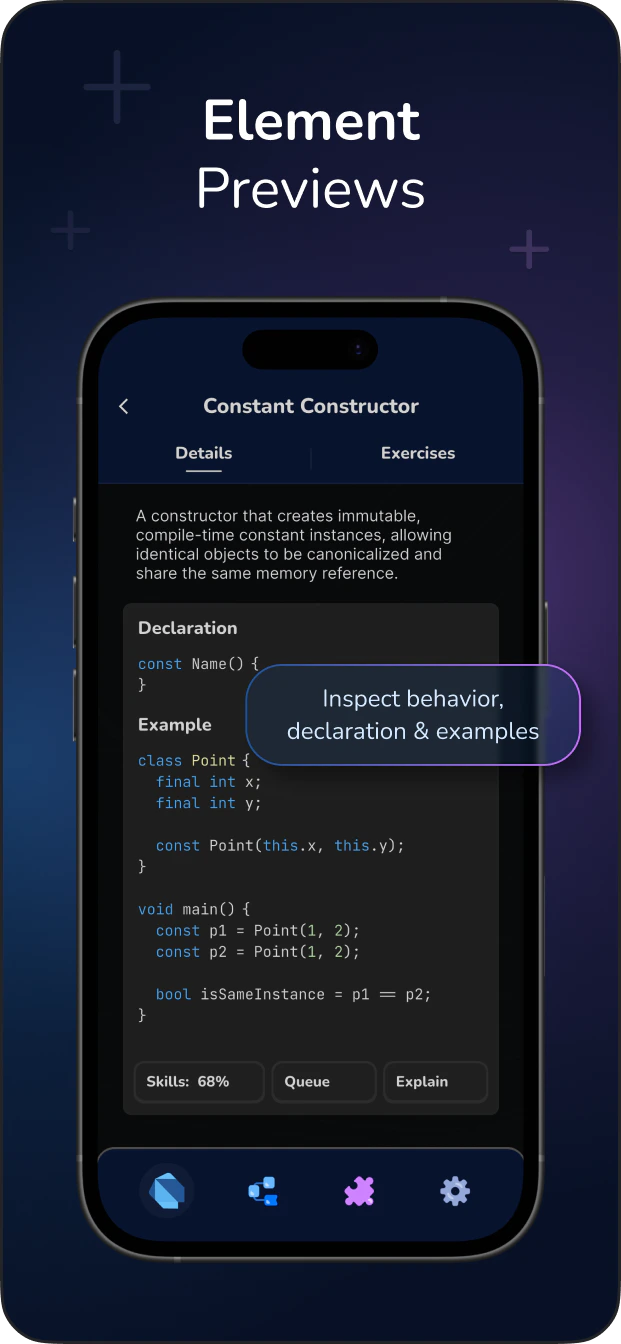
